Recurrent Neural Networks (RNNs) are a class of deep learning models designed for sequential data, such as time series or natural language. In this blog post, we will discuss the architecture of RNNs, their key components, and their applications.

What are Recurrent Neural Networks?

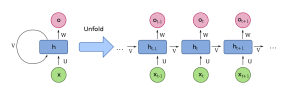

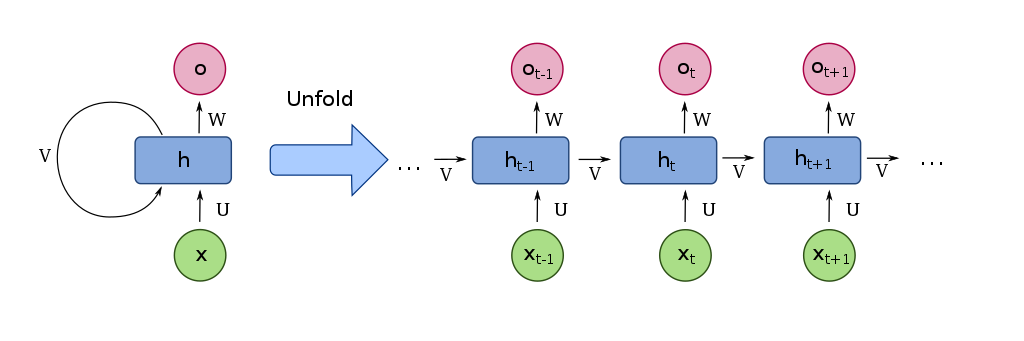

- RNNs are a type of neural network designed to handle sequential data by maintaining a hidden state that can capture information from previous time steps.

- They use recurrent connections (loops) that feed the hidden state from one time step back into the network at the next, so information persists across time steps and the network can learn patterns and dependencies in the input sequences.
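To make the loop concrete, here is a minimal sketch of a plain RNN forward pass in pure Python. It assumes the common update rule h_t = tanh(W_xh x_t + W_hh h_(t-1) + b_h); the function names (`rnn_step`, `rnn_forward`), the weight names, and the toy values are illustrative choices for this post, not part of any library.

```python
import math

def rnn_step(x, h_prev, W_xh, W_hh, b_h):
    """One step of a plain RNN: h_t = tanh(W_xh x_t + W_hh h_prev + b_h)."""
    h_new = []
    for i in range(len(h_prev)):
        total = b_h[i]
        total += sum(W_xh[i][j] * x[j] for j in range(len(x)))            # input contribution
        total += sum(W_hh[i][k] * h_prev[k] for k in range(len(h_prev)))  # recurrent contribution
        h_new.append(math.tanh(total))
    return h_new

def rnn_forward(inputs, h0, W_xh, W_hh, b_h):
    """Run the same step over a whole sequence, carrying the hidden state along."""
    h = h0
    states = []
    for x in inputs:
        h = rnn_step(x, h, W_xh, W_hh, b_h)
        states.append(h)
    return states

# Toy, hand-picked weights (assumed values): 1 input feature, 2 hidden units.
W_xh = [[0.5], [-0.3]]
W_hh = [[0.1, 0.2], [0.0, 0.4]]
b_h = [0.0, 0.0]

# Only the first time step carries a non-zero input.
sequence = [[1.0], [0.0], [0.0]]
states = rnn_forward(sequence, [0.0, 0.0], W_xh, W_hh, b_h)

for t, h in enumerate(states):
    print(t, h)
```

Even though the inputs at the second and third steps are zero, the hidden state stays non-zero there, because the recurrent term carries forward what the network saw at the first step.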

Key Components of RNNs:

- Hidden State: A dynamic internal memory that stores information from previous time steps, allowing the network to capture temporal dependencies.

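- Gates (in gated variants such as LSTM and GRU): Mechanisms that control the flow of information into and out of the network's internal state, so that relevant information is retained and irrelevant information is discarded. A plain RNN has no gates and simply overwrites its hidden state at every step; an LSTM adds input, forget, and output gates, and a GRU uses update and reset gates.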
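Gating of this kind is what the LSTM variant of the RNN adds. The sketch below shows one LSTM step reduced to a single input feature and a single hidden unit, so every weight is a scalar. It assumes the standard LSTM equations (input, forget, and output gates plus a candidate value); the parameter dictionary, its key names, and the hand-picked biases are hypothetical and chosen only to make the gate behaviour visible.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One scalar LSTM step. p holds weights/biases for the gates i, f, o and candidate g."""
    i = sigmoid(p["w_xi"] * x + p["w_hi"] * h_prev + p["b_i"])    # input gate: how much new info to write
    f = sigmoid(p["w_xf"] * x + p["w_hf"] * h_prev + p["b_f"])    # forget gate: how much old memory to keep
    o = sigmoid(p["w_xo"] * x + p["w_ho"] * h_prev + p["b_o"])    # output gate: how much memory to expose
    g = math.tanh(p["w_xg"] * x + p["w_hg"] * h_prev + p["b_g"])  # candidate value to write
    c = f * c_prev + i * g       # updated cell state (the long-term memory)
    h = o * math.tanh(c)         # updated hidden state (what the next layer sees)
    return h, c

def make_params(b_i, b_f):
    """Hypothetical helper: zero weights except the candidate's input weight."""
    keys = ["w_xi", "w_hi", "w_xf", "w_hf", "w_xo", "w_ho", "w_xg", "w_hg",
            "b_i", "b_f", "b_o", "b_g"]
    p = {k: 0.0 for k in keys}
    p["w_xg"] = 1.0
    p["b_i"] = b_i
    p["b_f"] = b_f
    return p

# Forget gate open, input gate closed: the old memory is retained.
h_keep, c_keep = lstm_step(5.0, 0.0, 0.7, make_params(b_i=-20.0, b_f=20.0))

# Forget gate closed, input gate open: the memory is replaced by the new candidate.
h_new, c_new = lstm_step(5.0, 0.0, 0.7, make_params(b_i=20.0, b_f=-20.0))

print(c_keep, c_new)
```

With the first setting the cell state stays very close to its previous value of 0.7 regardless of the input; with the second it is overwritten by tanh of the input. In a trained network the gate values are learned and depend on the current input and previous hidden state rather than being fixed by hand.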

Applications of Recurrent Neural Networks:

- Natural Language Processing: Tasks such as sentiment analysis, text generation, and machine translation benefit from RNNs’ ability to capture context and dependencies within text data.

- Time Series Forecasting: RNNs can model complex temporal patterns in time series data, making them suitable for tasks like stock market prediction or weather forecasting.

- Sequence-to-Sequence Learning: RNNs can be used for tasks that involve mapping input sequences to output sequences, such as speech recognition or video captioning.
