Neural networks form the backbone of deep learning, which drives many recent advances in artificial intelligence, from image recognition to natural language processing. In this blog post, we will discuss the core principles of neural networks, their architecture, and their applications.

What are Neural Networks?

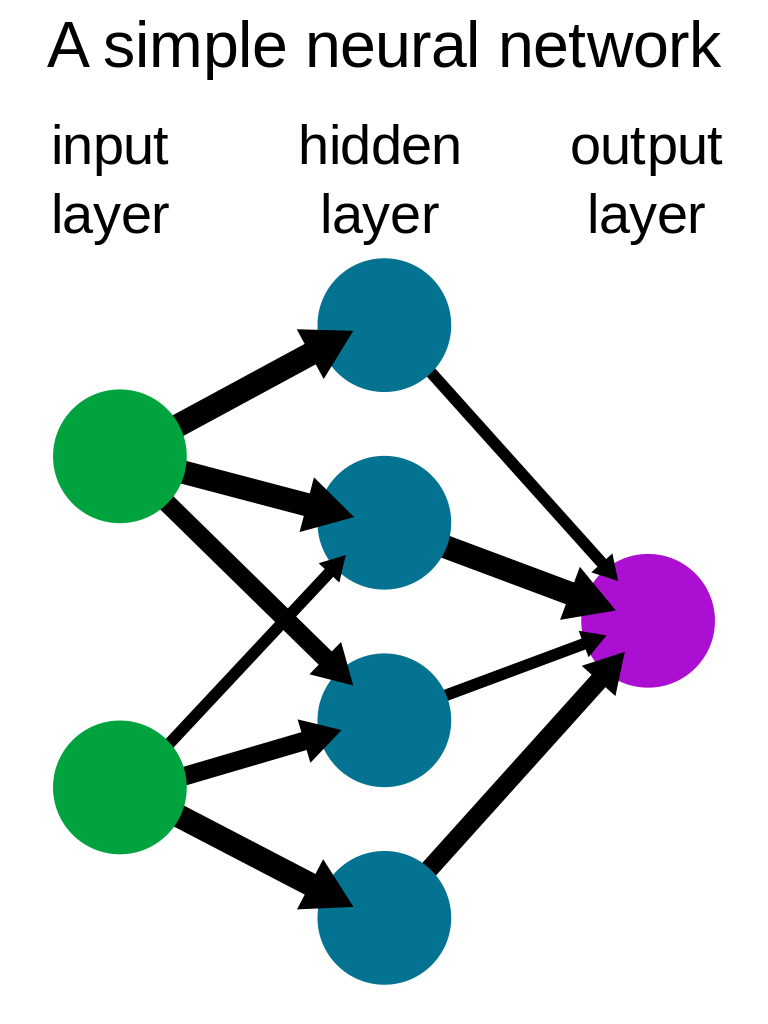

- Neural Networks are computational models inspired by the biological neural networks of the human brain.

- They consist of interconnected layers of neurons that transform input data into output. During training, they adjust the strength of their connections to learn patterns from examples.
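To illustrate how data flows through layers of neurons, here is a minimal pure-Python sketch of a tiny network with two inputs, three hidden neurons, and one output. The weights and biases are hypothetical, hand-picked values (a trained network would learn them), and the helper names `dense` and `forward` are our own, not from any library.

```python
def relu(x: float) -> float:
    """Rectified linear unit: passes positive values, zeroes out negatives."""
    return max(0.0, x)


def dense(inputs, weights, biases, activation):
    """One fully connected layer.

    weights[j][i] is the weight from input i to neuron j; neuron j outputs
    activation(sum_i weights[j][i] * inputs[i] + biases[j]).
    """
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(x):
    """2 inputs -> 3 hidden ReLU neurons -> 1 linear output (hypothetical parameters)."""
    hidden = dense(x, [[0.5, -0.2], [0.1, 0.8], [-0.7, 0.3]], [0.0, 0.1, 0.2], relu)
    return dense(hidden, [[1.0, -0.5, 0.25]], [0.05], lambda z: z)  # identity output


print(forward([1.0, 2.0]))
```

For the input `[1.0, 2.0]` the hidden layer produces roughly `[0.1, 1.8, 0.1]`, and the network returns a one-element list of approximately -0.725.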

Key Components of Neural Networks:

- Neurons: The basic computational units of a neural network. Each neuron receives inputs, computes a weighted sum of them plus a bias, and passes the result through an activation function to produce its output.

- Weights: The adjustable parameters that determine the strength of the connections between neurons. Training adjusts them, together with each neuron's bias, to reduce the network's error.

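- Activation Functions: Non-linear functions, such as ReLU, sigmoid, or tanh, applied to a neuron's weighted sum. They introduce non-linearity into the network; without them, any stack of layers would reduce to a single linear transformation.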
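The components above can be made concrete with a minimal pure-Python sketch. It shows one neuron computing a weighted sum plus a bias, then a scalar demonstration of why non-linearity matters: two linear layers stacked without an activation behave exactly like one linear layer, while placing ReLU between them breaks that equivalence. The function names and parameter values are illustrative assumptions, not taken from any library.

```python
import math


def sigmoid(z: float) -> float:
    """Squashes z into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def relu(z: float) -> float:
    """Returns z for positive inputs and 0 otherwise."""
    return max(0.0, z)


def neuron(inputs, weights, bias, activation=math.tanh):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


# Two stacked scalar "layers" with NO activation in between...
def two_linear_layers(x, w1, b1, w2, b2):
    return w2 * (w1 * x + b1) + b2


# ...compute the same function as one linear layer with weight w2*w1 and bias w2*b1 + b2.
def collapsed(x, w1, b1, w2, b2):
    return (w2 * w1) * x + (w2 * b1 + b2)


# Inserting ReLU between the two layers breaks that equivalence.
def two_layers_with_relu(x, w1, b1, w2, b2):
    return w2 * relu(w1 * x + b1) + b2


print(neuron([1.0, 2.0], [0.5, -0.25], 0.0, sigmoid))  # weighted sum is 0, so 0.5

params = (2.0, -1.0, 3.0, 0.5)  # hypothetical w1, b1, w2, b2
for x in [-2.0, 0.0, 4.0]:
    print(x, two_linear_layers(x, *params), collapsed(x, *params), two_layers_with_relu(x, *params))
```

The second and third columns always match, while the ReLU version differs at x = -2.0 and x = 0.0, where the first layer's output is negative and gets clipped to zero.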

Types of Neural Networks:

- Feedforward Neural Networks: Networks in which information flows in one direction, from input to output layers, with no loops or cycles.

- Recurrent Neural Networks (RNNs): Networks with recurrent connections (loops) that carry a hidden state from one time step to the next, making them suitable for processing sequences of data such as text or time series.

- Convolutional Neural Networks (CNNs): Networks whose convolutional layers slide small learned filters over grid-like data, such as images or audio signals, to capture local patterns efficiently.
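The core mechanisms of recurrent and convolutional layers can be sketched in a few lines of plain Python. Below, a scalar Elman-style recurrent cell reuses the same weights at every time step and carries a hidden state forward, and a "valid" 1-D convolution slides a small kernel over a signal. The scalar state, the weight values, and the edge-detecting kernel are illustrative assumptions. Real RNN and CNN layers operate on vectors, matrices, and multiple channels, and deep learning libraries typically compute cross-correlation under the name "convolution", as this sketch does.

```python
import math


def rnn_step(h_prev: float, x: float, w_h: float, w_x: float, b: float) -> float:
    """h_t = tanh(w_h * h_{t-1} + w_x * x_t + b) for a scalar hidden state."""
    return math.tanh(w_h * h_prev + w_x * x + b)


def run_rnn(sequence, w_h=0.5, w_x=1.0, b=0.0):
    """Feed a sequence through the cell and return the hidden state after each step."""
    h = 0.0  # initial hidden state
    states = []
    for x in sequence:
        h = rnn_step(h, x, w_h, w_x, b)  # the same weights are reused at every time step
        states.append(h)
    return states


def conv1d(signal, kernel):
    """'Valid' 1-D convolution (cross-correlation): each output depends only on a
    local window of len(kernel) inputs, and the same kernel is used at every position."""
    k = len(kernel)
    return [
        sum(kernel[j] * signal[i + j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


# A single input pulse at t = 0 still influences the later hidden states.
print(run_rnn([1.0, 0.0, 0.0]))

# A [-1, 1] kernel responds to rising (+1) and falling (-1) edges.
print(conv1d([0, 0, 1, 1, 1, 0, 0], [-1, 1]))  # [0, 1, 0, 0, -1, 0]
```

In the RNN run, the hidden state stays positive but decays after the pulse, which is the "information persisting across time steps" described above; in the convolution, weight sharing means the same two-number kernel detects an edge wherever it occurs.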

Applications of Neural Networks:

- Image Recognition: Identifying objects, scenes, and activities in images.

- Natural Language Processing: Analyzing and generating human language.

- Reinforcement Learning: Representing the policies or value estimates of agents that learn optimal behavior through interaction with an environment.

Challenges and Future Directions:

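- Training deep neural networks with many layers is hampered by the “vanishing gradient problem”: during backpropagation, gradients can shrink exponentially as they pass back through the layers, so the earliest layers learn very slowly.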

- Designing efficient and scalable neural network architectures for various tasks and domains.

- Ensuring interpretability and explainability of neural network models, making their decision-making processes more transparent.
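The vanishing gradient problem can be seen in a toy calculation. Assuming a chain of scalar neurons with sigmoid activations and every weight fixed at 1 (an illustrative simplification, not a realistic network), backpropagation multiplies in one factor `weight * sigmoid'(z)` per layer. Because the sigmoid's derivative never exceeds 0.25, the gradient reaching the input is at most 0.25 raised to the number of layers. The function name `input_gradient` is our own.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def input_gradient(depth: int, weight: float = 1.0, x: float = 0.0) -> float:
    """Chain-rule derivative d(output)/d(input) for `depth` stacked scalar
    neurons a_k = sigmoid(weight * a_{k-1}), starting from a_0 = x."""
    a = x
    grad = 1.0
    for _ in range(depth):
        a = sigmoid(weight * a)
        # d a_k / d a_{k-1} = weight * sigmoid'(z), where sigmoid'(z) = a_k * (1 - a_k) <= 0.25
        grad *= weight * a * (1.0 - a)
    return grad


for depth in (1, 5, 10, 20):
    print(f"depth {depth:2d}: gradient {input_gradient(depth):.3e}  "
          f"(bound 0.25**depth = {0.25 ** depth:.3e})")
```

Depth 1 gives exactly 0.25, the sigmoid's maximum slope at z = 0; every additional layer multiplies in another factor of at most 0.25, so by 20 layers the gradient signal at the input is vanishingly small.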
